ZDNET’s key takeaways

- Anthropic updated its AI training policy.

- Users can now opt in to having their chats used for training.

- This deviates from Anthropic’s earlier stance.

Anthropic has become a leading AI lab, with one of its biggest draws being its strict position on prioritizing consumer data privacy. From the onset of Claude, its chatbot, Anthropic took a stern stance against using user data to train its models, deviating from a common industry practice. That is now changing.

Users can now opt in to having their data used to further train Anthropic’s models, the company said in a blog post updating its consumer terms and privacy policy. The data collected is meant to help improve the models, making them safer and more intelligent, the company said in the post.

While this change does mark a sharp pivot from the company’s typical approach, users will still have the option to keep their chats out of training. Keep reading to find out how.

Who does the change affect?

Before I get into how to turn it off, it’s worth noting that not all plans are impacted. Commercial plans, including Claude for Work, Claude Gov, Claude for Education, and API usage, remain unchanged, even when accessed by third parties through cloud services like Amazon Bedrock and Google Cloud’s Vertex AI.

The updates apply to Claude Free, Pro, and Max plans, meaning that if you are an individual user, you will now be subject to the Updates to Consumer Terms and Policies and will be given the option to opt in or out of training.

How do you opt out?

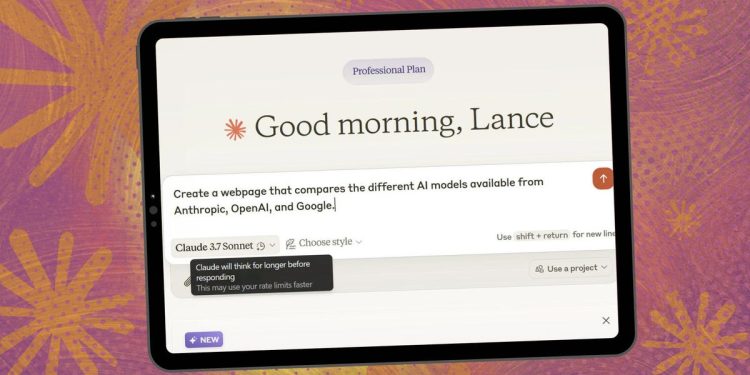

If you are an existing user, you will be shown a pop-up like the one shown here, asking you to opt in or out of having your chats and coding sessions used to train and improve Anthropic’s AI models. When the pop-up comes up, make sure to actually read it, because the bolded heading of the toggle isn’t straightforward; rather, it says “You can help improve Claude,” referring to the training feature. Anthropic does clarify that underneath in a bolded statement.

You have until Sept. 28 to make the selection, and once you do, it will automatically take effect on your account. If you choose to have your data used for training, Anthropic will only use new or resumed chats and coding sessions, not past ones. After Sept. 28, you will have to decide on your model training preferences to keep using Claude. The decision you make is reversible at any time via Privacy Settings.

New users will have the option to select their preference as they sign up. As mentioned before, it’s worth taking a close look at the wording when signing up, as it is likely to be framed as whether you want to help improve the model or not, and could always be subject to change. While it is true that your data will be used to improve the model, it’s worth highlighting that the training will be done by saving your data.

Data saved for five years

Another change to the Consumer Terms and Policies is that if you opt in to having your data used, the company will retain that data for five years. Anthropic justifies the longer time period as necessary to allow the company to make better model developments and safety improvements.

When you delete a conversation with Claude, Anthropic says it will not be used for model training. If you don’t opt in to model training, the company’s existing 30-day data retention period applies. Again, this doesn’t apply to Commercial Terms.

Anthropic also shared that users’ data won’t be sold to a third party, and that it uses tools to “filter or obfuscate sensitive data.”

Data is essential to how generative AI models are trained, and they only get smarter with additional data. As a result, companies are always vying for user data to improve their models. For example, Google just recently made a similar move, renaming “Gemini Apps Activity” to “Keep Activity.” When the setting is toggled on, the company says a sample of your uploads, starting on Sept. 2, will be used to “help improve Google services for everyone.”