On November 2, 2022, I attended a Google AI event in New York City. One of the themes was responsible AI. As I listened to executives talk about how they aligned their technology with human values, I realized that the malleability of AI models was a double-edged sword. Models could be tweaked to, say, minimize biases, but also to enforce a specific point of view. Governments could demand manipulation to censor unwelcome facts and promote propaganda. I envisioned this as something that an authoritarian regime like China might employ. In the United States, of course, the Constitution would prevent the government from messing with the outputs of AI models created by private companies.

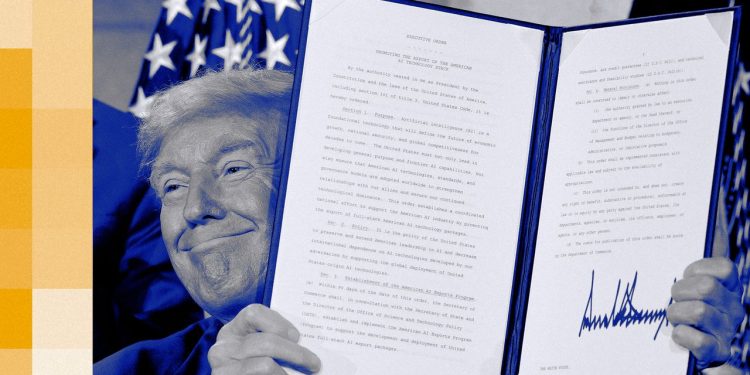

This Wednesday, the Trump administration released its AI manifesto, a far-ranging action plan for one of the most vital issues facing the country, and even humanity. The plan generally focuses on besting China in the race for AI supremacy. But one part of it seems more in sync with China’s playbook. In the name of truth, the US government now wants AI models to adhere to Donald Trump’s definition of that word.

You won’t find that intent plainly stated in the 28-page plan. Instead it says, “It is essential that these systems be built from the ground up with freedom of speech and expression in mind, and that U.S. government policy does not interfere with that objective. We must ensure that free speech flourishes in the era of AI and that AI procured by the Federal government objectively reflects truth rather than social engineering agendas.”

That’s all fine until the last sentence, which raises the question: truth according to whom? And what exactly is a “social engineering agenda”? We get a clue about this in the very next paragraph, which instructs the Department of Commerce to look at the Biden-era AI rules and “eliminate references to misinformation, Diversity, Equity, and Inclusion, and climate change.” (Weird uppercase as written in the published plan.) Acknowledging climate change is social engineering? As for truth, in a fact sheet about the plan, the White House says, “LLMs shall be truthful and prioritize historical accuracy, scientific inquiry, and objectivity.” Sounds good, but this comes from an administration that limits American history to “uplifting” interpretations, denies climate change, and regards Donald Trump’s claims about being America’s greatest president as objective truth. Meanwhile, just this week, Trump’s Truth Social account reposted an AI video of Obama in jail.

In a speech touting the plan in Washington on Wednesday, Trump explained the logic behind the directive: “The American people don’t want woke Marxist lunacy in the AI models,” he said. Then he signed an executive order entitled “Preventing Woke AI in the Federal Government.” While specifying that the “Federal Government should be hesitant to regulate the functionality of AI models in the private marketplace,” it declares that “in the context of Federal procurement, it has the obligation not to procure models that sacrifice truthfulness and accuracy to ideological agendas.” Since all the big AI companies are courting government contracts, the order appears to be a backdoor effort to ensure that LLMs in general show fealty to the White House’s interpretation of history, sexual identity, and other hot-button issues. In case there’s any doubt about what the government regards as a violation, the order spends several paragraphs demonizing AI that supports diversity, calls out racial bias, or values gender equality. Pogo alert: Trump’s executive order banning top-down ideological bias is a blatant exercise in top-down ideological bias.

Marx Madness

It’s up to the companies to determine how to handle these demands. I spoke this week to an OpenAI engineer working on model behavior who told me that the company already strives for neutrality. In a technical sense, they said, meeting government standards like being anti-woke shouldn’t be a huge hurdle. But this isn’t a technical dispute: It’s a constitutional one. If companies like Anthropic, OpenAI, or Google decide to try minimizing racial bias in their LLMs, or make a conscious choice to ensure the models’ responses reflect the dangers of climate change, the First Amendment presumably protects those decisions as exercising the “freedom of speech and expression” touted in the AI Action Plan. A government mandate denying government contracts to companies exercising that right is the essence of interference.

You might think that the companies building AI would fight back, citing their constitutional rights on this issue. But so far no Big Tech company has publicly objected to the Trump administration’s plan. Google celebrated the White House’s support of its pet issues, like boosting infrastructure. Anthropic published a positive blog post about the plan, though it complained about the White House’s sudden seeming abandonment of strong export controls earlier this month. OpenAI says it is already close to achieving objectivity. Nothing about asserting their own freedom of expression.

In on the Action

The reticence is understandable because, overall, the AI Action Plan is a bonanza for AI companies. While the Biden administration mandated scrutiny of Big Tech, Trump’s plan is a big fat green light for the industry, which it regards as a partner in the national struggle to beat China. It allows the AI powers to essentially blow past environmental objections when constructing massive data centers. It pledges support for AI research that will flow to the private sector. There’s even a provision that limits some federal funds for states that try to regulate AI on their own. That’s a consolation prize for a failed portion of the recent budget bill that would have banned state regulation for a decade.

For the rest of us, though, the “anti-woke” order is not so easily dismissed. AI is increasingly the medium by which we get our news and information. A founding principle of the United States has been the independence of such channels from government interference. We have seen how the current administration has cowed parent companies of media giants like CBS into apparently compromising their journalistic principles to favor corporate goals. With this “anti-woke” agenda extended to AI models, it’s not unreasonable to expect similar accommodations. Senator Edward Markey has written directly to the CEOs of Alphabet, Anthropic, OpenAI, Microsoft, and Meta urging them to fight the order. “The details and implementation plan for this executive order remain unclear,” he writes, “but it will create significant financial incentives for the Big Tech companies … to ensure their AI chatbots do not produce speech that would upset the Trump administration.” In a statement to me, he said, “Republicans want to use the power of the government to make ChatGPT sound like Fox & Friends.”

As you might suspect, this view isn’t shared by the White House team working on the AI plan. They believe their goal is true neutrality, and that taxpayers shouldn’t have to pay for AI models that don’t reflect unbiased truth. Indeed, the plan itself points a finger at China as an example of what happens when truth is manipulated. It instructs the government to examine frontier models from the People’s Republic of China to determine “alignment with Chinese Communist Party talking points and censorship.” Unless the corporate overlords of AI get some backbone, a future evaluation of American frontier models might well reveal lockstep alignment with White House talking points and censorship. But you might not find that out by querying an AI model. Too woke.

This is an edition of Steven Levy’s Backchannel newsletter. Read previous coverage from Steven Levy here.